Drawing on feedback from members of disability communities, Apple has created new accessibility tools for its devices. They are designed to support people with speech, hearing, vision, and cognitive disabilities. Among the features Apple has previewed, the standout is Personal Voice, designed for people at risk of losing their ability to speak. It lets them create a synthesized voice that sounds like their own, which they can then use to talk to family and friends.

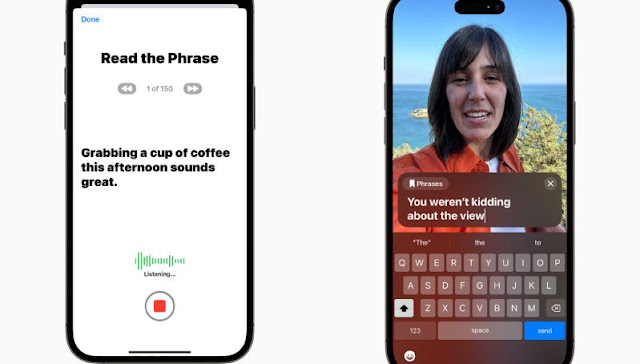

Users will be able to create their Personal Voice by reading a list of text prompts aloud to record 15 minutes of audio on their iPhone or iPad. Personal Voice works together with the Live Speech feature: the user types what they want to say in Live Speech, and the text is read aloud to others in their Personal Voice.

Another feature, called Assistive Access, is designed for people with cognitive disabilities. It streamlines Apple’s core apps, paring them down to their essential features so that the experience is not overwhelming. For example, it could show high-contrast buttons and large text labels. Assistive Access provides modified versions of Phone, FaceTime, Messages, Camera, Photos, and Music.

Next, Apple is adding a new detection mode to Magnifier. It will help people who are blind or have low vision interact with physical objects that carry several text labels. When a user points their device’s camera at the labels and moves a finger across them, the feature reads aloud the text on each label the finger reaches.

In addition to these features, Apple is bringing new accessibility tools to the Mac as well. These include a text size adjustment option in Finder, Messages, Mail, Calendar, and Notes; the ability to pause GIFs in Safari and Messages; a customizable speech rate for Siri; and phonetic suggestions in Voice Control during text editing.